Originally published at: Tracking My iPhone Camera Usage With a Deep Dive into EXIF Data - TidBITS

I “downgraded” from the iPhone 16 Pro to the iPhone 17 this year because only one feature unique to the iPhone 17 Pro interested me: the 4x/8x zoom provided by its Telephoto camera. In the end, I decided that it was more important for me to experience the plain iPhone 17. I didn’t think I had regularly used the iPhone 16 Pro’s 5x zoom, which relies on its Telephoto camera and tetraprism lens, because I’m more interested in macro photography. The photos that make me the happiest are the extreme close-ups I get by stuffing my iPhone into a flower to capture the alien-looking stamens and pistils surrounded by the glorious colors of the petals.

While making that decision, I wondered how many photos I’d taken in the last year with the iPhone 16 Pro’s 5x zoom. I poked briefly at smart albums in Photos but couldn’t figure it out at the time, so I proceeded with the move to the iPhone 17 without that data.

However, while chatting with Allison Sheridan about our next Chit Chat Across the Pond podcast topic, I commented that I had downgraded because I didn’t think I used the Telephoto camera much and felt that the iPhone 17’s new 48-megapixel Ultra Wide camera would do as well as the iPhone 16 Pro’s for macro shots. She said that she loved her iPhone 16 Pro’s 5x zoom and would give up the Ultra Wide camera and its macro photos in a heartbeat for the more capable optical zoom. That’s when I mentioned my failed smart album efforts, with which she had also struggled.

And then I went down the rabbit hole, emerging a day later with an interesting podcast discussion and this article.

ChatGPT and ExifTool

Part of the reason I had given up on using a smart album in Photos is that, after my initial experiments proved fruitless, I asked ChatGPT, and it was negative about that approach. Instead, it recommended Phil Harvey’s free ExifTool, a command-line tool for reading, writing, and editing metadata in numerous formats, including the EXIF format, which documents various camera-specific bits of metadata about every iPhone photo.

Although ExifTool is a little intimidating to install (it’s not signed, so macOS tries hard to prevent you from installing it for fear that it’s malware), it’s easy enough to use when ChatGPT provides commands to copy and paste.

After some back and forth, I discovered that part of my confusion with Photos is that it identifies the images taken with the iPhone 16 Pro’s Telephoto camera as having a 35mm-equivalent focal length of 120 mm, whereas ExifTool revealed that the actual focal length, as shown by the EXIF FocalLength field, was 15.7 mm. Similarly, the Wide camera reports a 35mm-equivalent focal length of 24 mm, but the actual focal length is 6.8 mm. The Ultra Wide camera reports a 35mm-equivalent focal length of 14 mm, corresponding to a true focal length of 2.2 mm.

It makes sense that Photos would report the 35mm-equivalent focal length that photographers understand, even though the actual focal lengths are much smaller, as they must be for the lenses to fit within the iPhone’s frame. However, when you’re building a smart album, Photos only knows about the actual focal lengths, not the 35mm equivalents. But I didn’t discover that until much later.
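To make the relationship between the two numbers concrete, here is a small Python sketch that computes the ratio between each 35mm-equivalent and actual focal length quoted above. The name `crop_factor` is introduced here for illustration; the figures are the rounded values from the text, so the ratios are approximate.

```python
# Focal lengths quoted in the article for the iPhone 16 Pro:
# (actual focal length in mm, 35mm-equivalent focal length in mm).
CAMERAS = {
    "Ultra Wide": (2.2, 14),
    "Wide": (6.8, 24),
    "Telephoto": (15.7, 120),
}


def crop_factor(actual_mm, equivalent_mm):
    """Ratio between the 35mm-equivalent and the actual focal length."""
    return equivalent_mm / actual_mm


for name, (actual, equivalent) in CAMERAS.items():
    ratio = crop_factor(actual, equivalent)
    print(f"{name}: {actual} mm actual, {equivalent} mm equivalent, ratio {ratio:.1f}")
```

The ratio differs from camera to camera because each one sits in front of a different-sized sensor, which is why a single conversion factor can’t translate between what Photos displays and what a smart album matches.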

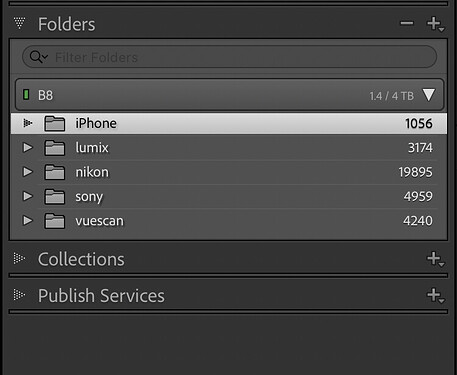

Once I could use ChatGPT to build complex ExifTool commands, I tasked it with counting the number of images from the last year that were taken with each of the three cameras. Here’s where the rabbit hole took a sharp turn downward, because I have nearly 49,000 images in my Photos library, and ExifTool isn’t quick at parsing them.

However, ChatGPT, ever helpful, offered to write a shell script that would accomplish what I wanted using techniques that would speed things up. In particular, it suggested finding all the images from the last year using the find tool, so ExifTool could examine the metadata from that subset rather than the full collection. And it did! It required a good bit of back and forth to get it working the way I wanted since I’m barely functional with shell scripting, but chatbots are nothing if not patient.
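The actual script is a shell script and isn’t reproduced here, but the idea of narrowing the file list before handing it to ExifTool can be sketched in Python. Everything below is an illustration under stated assumptions: the function names, the extension list, and the batch size are hypothetical, and the modification-time check merely stands in for what the find tool does. The ExifTool options used (`-json`, `-n`, and the tag names) are standard ones.

```python
import json
import subprocess
import time
from pathlib import Path

# Assumption: these extensions cover the originals of interest.
IMAGE_EXTENSIONS = {".heic", ".jpg", ".jpeg", ".dng"}
TAGS = ["-Model", "-LensModel", "-FocalLength",
        "-FocalLengthIn35mmFormat", "-DateTimeOriginal"]


def recently_modified(root, days=365, now=None):
    """First pass: keep image files modified within the last `days` days."""
    now = time.time() if now is None else now
    cutoff = now - days * 86400
    return sorted(
        path for path in Path(root).rglob("*")
        if path.is_file()
        and path.suffix.lower() in IMAGE_EXTENSIONS
        and path.stat().st_mtime >= cutoff
    )


def read_metadata(paths, batch_size=500):
    """Second pass: ask exiftool for a handful of tags, one batch at a time."""
    records = []
    for start in range(0, len(paths), batch_size):
        batch = [str(p) for p in paths[start:start + batch_size]]
        # -json emits one object per file; -n returns plain numeric values.
        result = subprocess.run(["exiftool", "-json", "-n", *TAGS, *batch],
                                capture_output=True, text=True, check=False)
        if result.stdout.strip():
            records.extend(json.loads(result.stdout))
    return records
```

Calling `read_metadata(recently_modified(folder))` on a folder of originals would then return one dictionary per file. Batching keeps each command line to a manageable length while still avoiding a separate ExifTool launch for every photo.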

I shared the script with Allison, who was able to get it working after granting Full Disk Access permissions to Terminal. But as we pored over our respective numbers on the podcast, it became clear that they were, if not wrong, at least misleading. And probably wrong. Part of the issue was that the iPhone 16 Pro’s Wide camera can be used for regular shots and 2x zoom photos, and the Ultra Wide camera can be used for wide-angle 0.5x zoom pictures and macro close-ups. Plus, there were selfies that used the front camera, and a surprising number of photos—for both of us—that weren’t taken with the iPhone 16 Pro at all. Those came from iPads, old iPhones, and other people who had shared photos with us. Luckily, ExifTool can extract the appropriate focal lengths to identify each type of photo.
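As a rough illustration of how focal lengths can separate these categories, the Python sketch below sorts one metadata record into a bucket. It is not the author’s script. The category names, the tolerance, the two 35mm-equivalent thresholds, and the test for the word “front” in LensModel are all assumptions that you would need to check against your own photos; only the three actual focal lengths come from the text.

```python
from collections import Counter

# Actual focal lengths (mm) quoted in the article for the iPhone 16 Pro.
ULTRA_WIDE_MM, WIDE_MM, TELEPHOTO_MM = 2.2, 6.8, 15.7
TOLERANCE_MM = 0.3          # assumption: slack for values such as 2.22
WIDE_2X_MIN_EQUIV = 40      # assumption: 2x shots report a larger 35mm equivalent
MACRO_MIN_EQUIV = 20        # assumption: macro shots report a larger 35mm equivalent


def near(value, target):
    return abs(value - target) <= TOLERANCE_MM


def classify(meta, model="iPhone 16 Pro"):
    """Return a category for one record of numeric EXIF values."""
    if meta.get("Model") != model:
        return "Other device"
    # Assumption: front-camera lens names contain the word "front".
    if "front" in str(meta.get("LensModel", "")).lower():
        return "Selfie"
    actual = meta.get("FocalLength")
    equiv = meta.get("FocalLengthIn35mmFormat")
    if actual is None:
        return "Unknown"
    if near(actual, TELEPHOTO_MM):
        return "Telephoto 5x"
    if near(actual, WIDE_MM):
        return "Wide 2x" if equiv is not None and equiv >= WIDE_2X_MIN_EQUIV else "Wide"
    if near(actual, ULTRA_WIDE_MM):
        return "Macro" if equiv is not None and equiv >= MACRO_MIN_EQUIV else "Ultra Wide"
    return "Unknown"


def count_categories(records):
    """Tally how many records fall into each category."""
    return Counter(classify(meta) for meta in records)
```

Checking the device first keeps photos from iPads, older iPhones, and other people out of the per-camera counts, which was one of the sources of misleading numbers.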

Verification was clearly necessary, so I came up with the idea of creating folders for the different types of photos and populating them with symlinks to all the matched photos. I figured it would be easy to use Quick Look to scan through a folder of symlinked images and see which ones didn’t belong. To keep the test runs quick and the scanning manageable, I also added date handling so the script could limit its work to a specified period. ChatGPT had no problem modifying the script as I directed, though, as always, quite a bit of back and forth was necessary to work through mistakes and incorrect assumptions.
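The symlink-folder idea is simple enough to sketch in a few lines of Python. This is only an illustration, not the script itself: the function name `link_into_folders` and the way duplicate file names are handled are assumptions made for the example.

```python
from pathlib import Path


def link_into_folders(assignments, dest_root):
    """Create dest_root/<category>/<name> symlinks for (path, category) pairs."""
    created = []
    for source, category in assignments:
        source = Path(source).resolve()
        folder = Path(dest_root) / category
        folder.mkdir(parents=True, exist_ok=True)
        link = folder / source.name
        suffix = 1
        # Avoid clobbering an earlier link when two photos share a file name.
        while link.exists() or link.is_symlink():
            link = folder / f"{source.stem}-{suffix}{source.suffix}"
            suffix += 1
        link.symlink_to(source)
        created.append(link)
    return created
```

Because the folders contain only links, the originals inside the Photos library are never moved or copied, and deleting a folder afterward removes nothing but the links.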

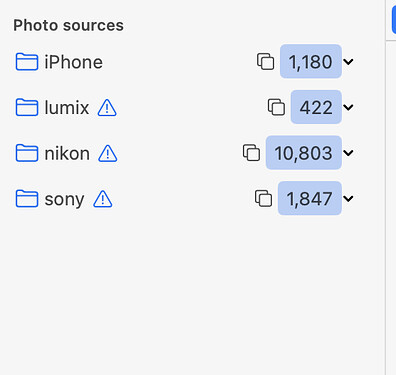

The folders were brilliant for revealing errors in the script. The most notable finding was that the script identified over 10,000 photos, which was significantly more than I had taken in the last year. The problem is that the find tool was looking at the last modified date for the original images, and Photos apparently tweaks that date after touching old images in some way. However, ExifTool can read the actual capture date. When ChatGPT rewrote the script to use find for the first pass and then have ExifTool identify only the photos from the last year, the total number dropped to about 1,500. In the end, this is what my script reported.
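The capture-date pass described above can be sketched as follows, assuming each photo’s metadata has already been read into a dictionary whose `DateTimeOriginal` value uses the standard EXIF layout of `YYYY:MM:DD HH:MM:SS`. The function names are hypothetical, and the sketch ignores time zones.

```python
from datetime import datetime, timedelta

EXIF_DATE_FORMAT = "%Y:%m:%d %H:%M:%S"


def capture_time(meta):
    """Parse DateTimeOriginal, returning None when it is missing or invalid."""
    value = meta.get("DateTimeOriginal")
    if not isinstance(value, str):
        return None
    try:
        # Ignore anything after the seconds, such as a time zone suffix.
        return datetime.strptime(value[:19], EXIF_DATE_FORMAT)
    except ValueError:
        return None


def taken_within(meta, days=365, now=None):
    """True when the photo was captured in the `days` leading up to `now`."""
    now = now or datetime.now()
    taken = capture_time(meta)
    return taken is not None and now - timedelta(days=days) <= taken <= now
```

Filtering on the capture date rather than the file’s modification date is what keeps old photos that Photos has touched recently out of the count.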

Interestingly, one photo was still completely wrong. For reasons I can’t explain, it somehow ended up being identified with the EXIF Model being “iPhone 7” while the LensModel was “iPhone 16 Pro back triple camera 2.22mm f/2.2.” Metadata corruption?
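A mismatch like this one is easy to flag automatically. The Python sketch below assumes that an Apple LensModel string begins with the Model name followed by “back” or “front,” which matches the examples in this article but isn’t guaranteed for every device; the function name is hypothetical.

```python
def lens_matches_model(meta):
    """Return False when LensModel names a different device than Model."""
    model = str(meta.get("Model") or "")
    lens = str(meta.get("LensModel") or "")
    if not model or not lens:
        return True  # nothing to compare
    if not lens.startswith(model):
        return False
    # Assumption: the model name is followed directly by "back" or "front",
    # so "iPhone 16" does not match a lens that belongs to "iPhone 16 Pro".
    remainder = lens[len(model):].lstrip()
    return remainder.startswith(("back", "front"))
```

Photos that fail the check are worth inspecting by hand before trusting whichever category they landed in.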

What About Those Telephoto Pictures?

As you can see in the screenshot above, I had 386 photos that relied on the Telephoto camera’s 5x zoom, but only 265 true macro photos from the Ultra Wide camera. Does that mean I made a mistake in not getting the iPhone 17 Pro?

No, and here’s where my folders were useful again. For me, Telephoto pictures fall into two categories: photos I (or a friend) take at my team’s cross country races and wildlife shots where I couldn’t get any closer to the animal in question. Unfortunately, every model of iPhone has always sucked at taking race photos. I need the zoom so I can fill the frame with the runners (and I usually crop the images even more afterward to focus on the people I care about), but even when it’s a sunny day, the shutter speed isn’t fast enough to capture images without some motion blurring. (I use the Camera+ app in its Action mode to take bursts at 2x zoom; the standard Camera app makes that too hard in stressful situations.)

These photos of Tonya were actually taken in 2023 with the iPhone 15 Pro, since the pictures of her in 2024 using the iPhone 16 Pro were less flattering. But you can still see how blurry they are, particularly the one on the right.

So, although I have taken a lot of race photos, and they’re better than nothing, I’m always disappointed by how much better they could have been. My wildlife photos are also often disappointingly blurry due to motion from the animal or the camera.

The Script Versus Smart Albums

I’m uncertain how generally useful my script is, since it’s focused on identifying images from the iPhone 16 Pro’s different cameras, and only secondarily counts photos taken with other devices. Nevertheless, you’re welcome to download it if you’re in a situation similar to mine or want to modify it for your own use. Don’t worry if you aren’t a shell scripting expert, because any chatbot should be able to help you install ExifTool, make the script executable, and adjust it for your iPhone model.

Alternatively, armed with the knowledge I gained from working with ChatGPT on the script, you may be able to learn everything you need by creating smart albums in Photos instead of fiddling with the command line. This approach can collect all the photos taken with each of the iPhone 16 Pro’s cameras, but I was unable to extend it to differentiate between regular and macro shots taken with the Ultra Wide camera and regular and 2x zoom shots from the Wide camera.

The key, which I alluded to earlier, is to use the Lens condition to match the actual focal length of each camera rather than the 35mm-equivalent focal length that appears in the Photos interface. That’s more easily said than done, although you don’t have to know the specific text to match. If you start typing in the Lens condition’s field, an auto-complete pop-up with many possible options appears.

Unfortunately, it isn’t wide enough to display the full name of many of the options, so you have to hover over those to see the expanded name, which has more significant digits than what ExifTool reports for FocalLength. Even more confusing, you may see separate entries for “iPhone 16 Pro back camera” and “iPhone 16 Pro back triple camera.” The “back camera” term matches videos and, in my case, photos taken with the Camera+ app in its 2x zoom mode. The “back triple camera” term matches photos where I explicitly used the 5x zoom.
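The lens names in question follow the same pattern as the LensModel value quoted earlier, “iPhone 16 Pro back triple camera 2.22mm f/2.2.” As a hedged illustration of how the camera-specific text can be separated from the focal length, here is a small Python sketch. The regular expression and the function name are assumptions based on that one example, not a documented format.

```python
import re

# Assumption: "<camera description> <focal length>mm f/<aperture>".
LENS_PATTERN = re.compile(
    r"^(?P<camera>.+?)\s+(?P<focal>\d+(?:\.\d+)?)mm\s+f/(?P<aperture>\d+(?:\.\d+)?)$"
)


def split_lens_name(lens_model):
    """Split a lens name into its camera text and its focal-length text."""
    match = LENS_PATTERN.match(lens_model.strip())
    if match is None:
        return None
    return {
        "camera": match["camera"],
        "focal_text": f"{match['focal']}mm f/{match['aperture']}",
        "focal_mm": float(match["focal"]),
    }
```

Given the lens name above, the `camera` part is “iPhone 16 Pro back triple camera” and the `focal_text` part is “2.22mm f/2.2.” A name without a focal length, such as a bare “iPhone 16 Pro back camera,” returns `None`.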

The practical upshot of all this is that you may need to match the Camera Model separately and then match just the focal length for the Lens condition—select the desired autocomplete entry and then delete the camera-specific text. My completed smart album for finding photos captured with the Telephoto camera looks like this, and you can easily modify it to pull out photos captured with the Wide and Ultra Wide cameras.

If you want to be complete and see how many selfies you’ve taken, that’s easier—just use the Photo is Selfie condition. Both the iPhone 17 and iPhone 17 Pro have Apple’s new 18-megapixel Center Stage camera for selfies, so those should improve regardless of which you choose.

What you can’t do in a smart album is distinguish between photos taken with the same lens at different effective focal lengths. In contrast, my script can differentiate between regular photos taken with the Wide camera and those that use the 2x zoom, as well as distinguish between wide-angle images taken with the Ultra Wide lens and those that are macro shots. My belief is that Photos looks only at the EXIF FocalLength field and ignores the FocalLengthIn35mmFormat field that the script relies on. In an ideal world, Apple would give smart albums in Photos an EXIF condition that would let users build complex rules matching any available EXIF field.

Ultimately, I’m not sad to have opted for a less-expensive iPhone 17 this year, and I’m curious to see whether I miss the Telephoto camera throughout the coming year. If you’re trying to decide between the iPhone 17 and iPhone 17 Pro when upgrading from a previous iPhone Pro model with a Telephoto camera, create a smart album with a Lens condition that matches the actual focal length of your 3x or 5x zoom photos, and then look at the images and ask yourself, “Do they make me happy?”