I’ve been taking pictures of cross-country and trail races this fall, first with my iPhone X and now with the iPhone 11 Pro. And frankly, I’m unimpressed. Let’s assume for the moment that the problems are my fault and if I only knew how to do something better, the photos would come out better. Here’s what I do.

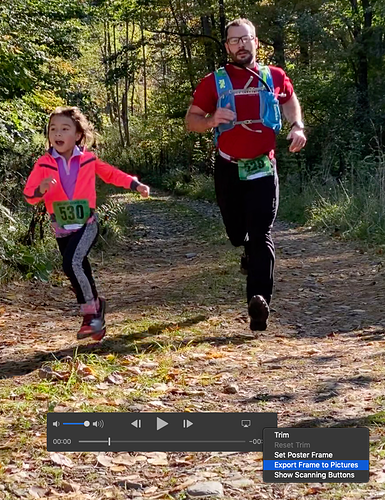

First, since the runners are moving fast and I want neither to affect their race nor to get run over myself, I pick what seems like a safe distance and use the 2x optical zoom. The iPhone is handheld, and the light is generally pretty good, though I have no real control over that, and when it’s a little darker or a cloud goes over, the quality suffers. On the iPhone X, I always used burst mode to capture multiple photos. With the iPhone 11 Pro, the new press-and-slide-left trick for getting burst mode is hard to use in the moment, so I mostly just took single shots. (And I lost one great shot of a father and daughter running into the finish together because I ended up in video mode. Amazingly, there’s no way to extract a still image from a video using built-in tools, so I was forced to take a screenshot of the video.)

Once I’m home, I go through the burst mode shots and pick the best one, often choosing based on stride form, facial expression, or race position (who’s behind, etc.). Then I apply the Auto adjustment, which usually improves things a little, and crop the photo, sometimes heavily. The cropping is necessary because even with 2x zoom, I can’t get nearly as close as would be ideal. It’s also necessary to focus on the primary runner (such as the person on my team, as opposed to others in the race) and to set up some minor bit of narrative (showing them in front of someone else rather than behind another person, or coming out of a trail with a group well behind, etc.).

But because the runners are moving fast, the camera has trouble focusing on them, and when I zoom and crop, any blur becomes more pronounced. I’ll see how the iPhone 11 Pro works in tomorrow’s PGXC race, but based on today’s trail race, it’s better at avoiding blur than the iPhone X yet still ends up with really grainy photos.

PGXC Race #1 (iPhone X): https://photos.app.goo.gl/Tn6oZU6fDY9NUxVLA

PGXC Race #2 (iPhone X): https://photos.app.goo.gl/2FCC6NgypT3cPPHXA

Danby Down & Dirty (iPhone 11 Pro): https://photos.app.goo.gl/TuoNAQaZ4ZrFPXoS7

The main thing I’m going to try differently tomorrow is using a monopod, assuming the tripod mount that expands wide enough to hold an iPhone 11 Pro arrived from Amazon today (the previous one I had maxed out at iPhone 5-size phones, something I didn’t realize until I tried it the other day). That should reduce camera shake, but it may be too clumsy for real-world usage at a race.

Any other suggestions for improving these action shots? Thanks!