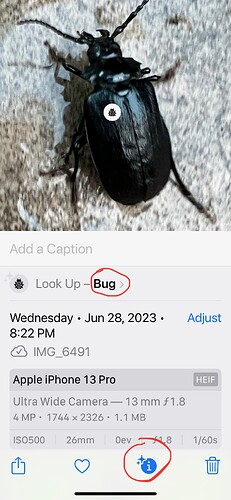

I’m not even sure it’s a “bug”. It’s a beetle which is an insect. Maybe they could get an API to iNaturalist to ID these better :-)

As a non-scientific term, “bug” covers most arthropods, both insects and non-insects. If you want a scientific identification, only a very specialized object-recognition system, or a human expert, is going to reliably help with that. I would not consider this a failure of object recognition.

I should be clearer. I’m not disappointed because the use of the term is not scientifically correct. That was meant as a side note. I’m disappointed because “bug” is a grossly broad classification of what is shown in the photo. I was embarrassed for Apple just to have seen that identification. A one-year-old would point to that picture and say “bug”. Now, in 2023, with GPT-4 helping us solve world hunger, it’s good to know Apple has finally figured out how to identify “bug” :-)

For example, while the community is still trying to agree on the specific ID for this photo, it seems pretty clear that it’s a “beetle”. Apple could have also figured that out.

But hey, it’s better than nothing.

I will add something else, however.

I have been adding keywords to my nature shots for many years. I may tag “Butterfly” but then also “Insect” so that I can query on Insect later and also pick up things like beetles, bees, etc.

It would be nice, therefore, if Apple’s recognition categories were also hierarchical, so I could query on “Animal” and have it pull up all of this, plus my Dog (which iOS 17 promises to identify).

Likewise, it would be nice to query my library for “plants” and have it find flowers, trees, etc.
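To make the hierarchy idea concrete, here is a minimal Python sketch of how a query on a broad term could pick up photos tagged with narrower ones. The keyword tree, the photo names, and the `ancestors` and `search` helpers are all made up for illustration; nothing here reflects how Apple Photos actually stores or searches its categories.

```python
# Hypothetical keyword hierarchy: each keyword maps to its broader parent.
PARENT = {
    "Butterfly": "Insect",
    "Beetle": "Insect",
    "Bee": "Insect",
    "Insect": "Animal",
    "Dog": "Animal",
    "Flower": "Plant",
    "Tree": "Plant",
}


def ancestors(keyword):
    """Return the keyword followed by every broader category above it."""
    chain = [keyword]
    while chain[-1] in PARENT:
        chain.append(PARENT[chain[-1]])
    return chain


def search(photos, query):
    """Return names of photos whose tag, or any ancestor of it, matches the query."""
    return [name for name, tag in photos.items() if query in ancestors(tag)]


# Made-up library: photo name -> its single most specific tag.
photos = {
    "IMG_001": "Beetle",
    "IMG_002": "Butterfly",
    "IMG_003": "Dog",
    "IMG_004": "Flower",
}

insects = search(photos, "Insect")
animals = search(photos, "Animal")
plants = search(photos, "Plant")
```

With a tree like this, tagging a photo only as “Butterfly” would be enough for it to show up under “Insect” and “Animal” too, without adding each broader keyword by hand.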

Organism ID is pretty specialized compared to ‘normal’ human perception, and as more is known, it gets fussier. Even Darwin often called spiders insects. I’d rather have them give a common language generic classification than something truly wrong. At least ‘bug’ for a beetle is better than calling a samoyed I once had a cat.

Apple may eventually get into more specialized classification, but non-vertebrates other than butterflies and dragonflies are sadly a niche interest, despite how amazing and crucially essential the critters are.

Do you have the Seek app? It’s iNaturalist’s attempt to ID things with the phone camera based on ML from IDs on their site. It’s good and lots of fun, but there are a lot of plants that seem obviously easy to ID to me that never get better than ‘Dicot’. And a fair number of the times it does get to species, it’s flat out wrong–not just species, but wrong family or worse. There’s no chance that a junco in a Seattle park is an Australian willie wagtail, bamboo is not a willow, and that leaf wasn’t a butterfly… But it’s quite useful when seasoned with salt, and has been helpful for mosses, fungi, lichens and slime molds even when it can’t get to species (some fungi and lichens can only be fully IDed by chemical or DNA analysis). Sometimes I can get Seek to ID something in a photo, but usually not small things such as multi-legged critters. Trying via the microscope was, not unexpectedly, a non-starter.

Merlin Bird ID is another very useful ML ID app. I haven’t tried it yet for visual ID, but it also has sound ID, and the interface is good. It keeps a database, and as it listens, it not only lists the birds, but after you stop recording you can select the bird and it will play what it recorded for the bird, or select part of the waveform and it will highlight the bird name in the list. It’s not always right, but it’s been really useful to ID what I record from the backyard morning chorus. I can either play bits of the recording to it, or import a sound file and it will ID everything it finds. You can also export the sound files, or use iMazing to copy the whole sqlite database. It does have one huge flaw–if it’s open in the foreground, the phone won’t auto-sleep, which has run my battery to zero a few times. A lesser problem is that it insists on precise location, and if you set a general location by hand, it doesn’t stick.

Looks like a bug to me…

Thanks. Lots of juicy feedback in your reply.

No, so I read up a little on it. This is a few years old, but I’m guessing still true:

Sounds like it’s more streamlined for getting IDs, and it favors getting a real-time response over getting one that is curated by the iNaturalist community. I have had a number of iNat posts over the years that got corrected by human experts.

Yea, this is fantastic. Literally every person should have this app installed :-) I think if everyone ran Merlin Sound ID every day, the world would be a much better place. It brings so much happiness.

If Merlin has an API, that would be a nice hook for Apple Photos to consume.

My observation is that after 12 minutes of Sound ID, it asks you if you want to continue. Doesn’t that protect your battery?

Yea, I’m probably more interested in nature than most. And it would be out of scope for Photos to try to replace the more sophisticated ID apps. Is “bug” as a generic classification for all invertebrates the place to draw that line? Not sure. And I’m sure the line will move over time.

But iOS 17 will purportedly ID specific foods and provide recipe links. That seems much harder to make precise or useful. If I show it a picture of brown mush on my plate over rice, will it ID that as the delicious Lamb Korma? Flavor isn’t visual; the chance its recipe will taste like what you’re eating is quite low. “Beetle” would appear to be a 100x easier problem to solve accurately.

Does it still only say bug when you tap on Bug> ? If I try to identify a flower, it only says flower until I tap on Flower> then it identifies it to be a specific flower or gives a list of flowers it thinks it might be.

Good catch! It does improve. So I guess Apple has some logic that does an initial classification, and then it uses that to probably invoke a secondary lookup, likely in a special purpose API, for more detail?

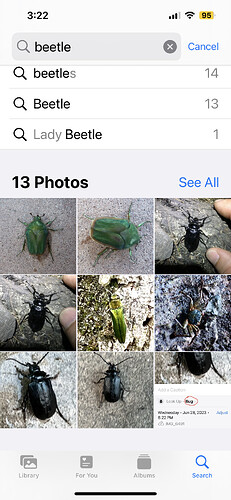

How that integrates with search is still unclear to me. “Bug” does find this beetle, and so does “beetle” (no, I haven’t added any metadata to those pix like keywords or captions). But “borer”, part of Siri’s guess, returns none of these beetle pix.

Plus, a search for “insect” does include this beetle (along with about 700 other pix in my library, including butterflies, moths, wasps, caterpillars, a walking stick, and a hermit crab)

So this is better than I thought.

Seek vs. iNaturalist app:

I hadn’t tried the iNaturalist app, since I assumed it’s basically an interface to the web site. I downloaded it and took a quick look, and that seems to be true, though you can use it without an account. [But if you don’t log in, every time you launch it you have to swipe through 6 pages to get to the skip sign in button. It also uses a very small font size for almost everything, and ignores accessibility settings.] To get an ID you have to upload a photo either from the camera or the photo app, and that joins their database. I like the maps of what’s been spotted where, and the field guides would be quite nice if there were a lot more of them. Only one is at all local to me. I was also pleased to see a microscopy project. On the whole, I wouldn’t want to turn a young kid loose on it because it’s a bit grabby of data since it is citizen science and the contributions are the point. For older beginners or the casual, it’s something of an investment to use. But I probably won’t use the app much just because of the font size issue.

Seek is real time and gamified, with virtually no learning curve. Still no accessibility compliance, but the fonts used are bigger and much more readable. It works on device, doesn’t track or collect locations (it checks once to get a non-precise general location), doesn’t upload photos, and is otherwise much easier to use for beginners (a couple of friends) and the more or less superficial (me). There’s an option to link it to an iNaturalist account if you want to. One small irritation is that you can’t use a Bluetooth shutter button with it.

Fortunately they’re both free so there’s no reason not to have both.

I do have an iNaturalist account, but rarely use it. For bugs, I’m more likely to ask at bugguide.net (insects, arachnids, and 'pedes):

Merlin battery drain:

Maybe it asks if the recording is active, but I always explicitly stop the recording. If I’m around the house I’m likely to put the phone down and wander off. By the time I notice, battery is low or dead. If it ever asks from that screen, I don’t see it and it doesn’t persist. There’s nothing in their settings to control it.

ML foods:

Apple will happily cater to all of the people in our strongly navel-gazing culture who will be thrilled to pretend that a bad photo will tell them in great detail about the components and nutrition of a plate of food. So much more me-affirming than noticing that a vast universe exists outside of their collective navels. Not that belly buttons are completely boring, but the navel-gazers will never manage to learn that.

I downloaded Seek (not to be confused with Seek Thermal).

I was initially dubious because it promptly tries to “upsell” you on iNaturalist as being more accurate by being location based:

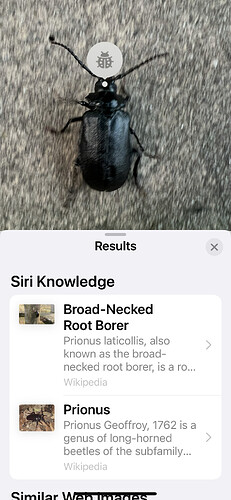

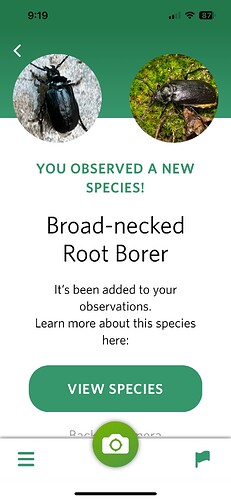

Still, I uploaded that same beetle photo, and in <1s I got an ID that matches the best-guess I have so far from the iNat community:

Is Seek’s algorithm so much faster and intelligent? Or is it being confident without having solid data? I mean, I think location is supposed to help with accuracy, as the screen shot above also suggests. But it makes sense to me that a truly intelligent system shouldn’t need to be told the location to figure out what it’s looking at.

So, I’m still curious about the differences happening under the hood here, but I’m definitely sold on trying it out! Thanks!

I guess I lost sight of the wanting keywords for photos part, and went straight for ML identification. If you uploaded a photo within Seek, that’s just a back channel to iNaturalist, and that isn’t what Seek does. Seek encourages you to spend more time looking at the real world.

Take Seek out for a walk and aim it at live things. It will (often) tell you what they are (and is right more than wrong for me). If it doesn’t make it to species, it will at least show a higher classification that can help you look it up. It will save a photo, but they aren’t often good ones, partly because it uses video mode, and partly because it gives you the last? frame available when it decided on an ID. (The model is trained on photos submitted to iNaturalist, and since a lot of those aren’t very good, it does some spiffy IDing from a not-good photo.) They’re basically for your reference. It keeps track of the things you identify, you go up levels, and there are challenges to encourage ranging farther afield or looking for specific things such as plant eating bugs.

For ML to produce accurate results (as opposed to the frequent hallucinations of “AI” aka artificial parroting), it needs to know as much context as possible so it can discard impossibilities/improbabilities for a given situation.

Location, date, and time of sighting and even weather conditions can be extremely important for organism IDs. As an over-broad example, many moths look almost exactly the same. But maybe one species flies in the spring, and one in autumn, or one at dawn, one at dusk. Maybe one lives in eastern US and several in the Rockies. They may or may not be closely related. More specific: The Americas have cacti, Africa has euphorbias. They are very similar in appearance, but not at all closely related; the similarity is due to convergent evolution. If you see a bee out and about when it’s fairly cool (below about 65F), it’s a bumblebee because bumblebees can choose to be warm blooded when necessary. Other bees (most insects) need enough warmth from the air to get their muscles working. The way people have transported species around complicates things, but a fair bit is known about which species have been moved where. Citizen science efforts like iNaturalist and some of the Zooniverse projects have helped improve that knowledge.
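As a rough illustration of how context can narrow things down, here is a small Python sketch that multiplies what the image alone suggests by how plausible each candidate is for the date or place, then renormalizes. The species names, the numbers, and the `rerank` helper are invented for the example; this is not a description of what Seek or iNaturalist actually does internally.

```python
def rerank(visual, prior):
    """Combine image-only probabilities with context priors and renormalize.

    visual: candidate name -> probability from the image alone
    prior:  candidate name -> plausibility given date/location (0 if impossible)
    """
    combined = {name: p * prior.get(name, 0.0) for name, p in visual.items()}
    total = sum(combined.values())
    if total == 0:
        # Context ruled out every candidate; fall back to the image-only scores.
        return dict(visual)
    return {name: p / total for name, p in combined.items()}


# Made-up case: two look-alike moths the image cannot tell apart.
visual = {"spring moth": 0.5, "autumn moth": 0.5}

# Made-up plausibility for a sighting in October.
prior_october = {"spring moth": 0.05, "autumn moth": 0.95}

result = rerank(visual, prior_october)
best = max(result, key=result.get)
```

The image alone is a coin toss here, but once the season is factored in, the autumn species clearly wins.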

There’s a marvelous book you’d probably like, Rob Dunn’s “Every Living Thing”, the story of how ‘we’ gradually learned how much diversity of life exists, and how we’ve tried to organize that knowledge. It should be readily available from good libraries as print or epub.

https://www.powells.com/book/every-living-thing-9780061430312

That does sound fascinating :-)

Harder to be precise? Perhaps. Harder to be useful? Hardly. Even rough identification of what recipes might lead to a particular food will be far more useful to the average person than a perfect taxonomic identification of a beetle. Only a relatively small percentage of people care much about nature (sad, but true), but everybody has to eat.

Well, but you don’t have to look up gourmet recipes online to “eat”.

But I will partly agree with you. It would certainly be useful to get a recipe to make what I’m currently eating and finding delicious. But precision will be tough since you can’t see taste. And I won’t know that I want the recipe to something until after I’ve started eating it, at which point the “image” will be less recognizable to the AI. And do I have to disassemble my sandwich so Photos can see all the ingredients inside so it can even get 25% close to giving me a recipe that matches that awesome creation in front of me?

So yea, it would be useful. But until my iPhone can smell, taste, and use X-ray vision, I think this is a fool’s errand. Chocolate chip cookie recipes will be easy. But who starts their interest in finding such a recipe by looking at a photo they took?

So for recipes, it seems to me that the problem they can solve is not useful, and what would be awesome they have no chance of providing.

Sorry, what’s ML?

Colleen, I think “ML” is used in this thread to stand for Machine Learning.

Yes. It’s a much better term than “AI”, because this software has no “intelligence” as we colloquially understand the term. The software doesn’t know what it is seeing.

Image recognition software picks an answer from a database of possibilities based on probabilities. The term “machine learning” is meant to point out that the internal data structures that encode these probabilities are generated by “training” a neural net by showing it millions of images, along with metadata identifying the object(s) in those images and letting the training software configure the neural net’s parameters based on that training data.

But ultimately, despite producing good results, the software still doesn’t know what the object is - it is simply reporting what name has the highest probability of being correct based on the results of the training. In other words, it can say that there’s (for example), an 85% chance that the image is a bicycle, and it might be able to draw a box around the pixels most likely to contain that bicycle, but it would have no clue about what a bicycle is used for. An app using the ML software could tell you, but only by conducting a web search for related terms, not because of any actual understanding.
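For anyone who wants to see the “highest probability wins” step spelled out, here is a minimal Python sketch. It assumes the trained network has already produced one raw score per label (the labels and scores below are made up), turns those scores into probabilities with a softmax, which is a common choice but only an assumption here, and reports the top one. The `softmax` and `top_label` helpers are illustrative names, not part of any particular library.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_label(labels, scores):
    """Return the most probable label and its probability."""
    probs = softmax(scores)
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best], probs[best]


# Made-up labels and raw scores standing in for a trained network's output.
labels = ["bicycle", "motorcycle", "beetle"]
scores = [4.0, 2.0, 0.5]

label, probability = top_label(labels, scores)
```

Note that nothing in this code knows what a bicycle is; it only picks the name attached to the largest number.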

Hence the reason why ML is a good term and AI is not.

Great feedback.

But isn’t that how all intelligence works? My brain does no better than what you describe here…

But I know we’re veering off topic a bit…

Thanks, Apple! iOS 17 fixes this. The identification pipeline shifted forward a notch.

Before, the (i) icon at the bottom just got a generic star to indicate “something” was identified. Now we can see it’s a bird.

Before, when tapping the (i), it would say “look up bird”, and then only after probably hitting some API would it actually ID the bird. Now, you can see we are told the species right away.

Nice work!