Following the sentiment expressed in your post, here are two other ChatGPT rewrites that you may find more accessible:

Winston Churchill

It is my firm belief that Masley’s insightful posts on Substack illuminate the formidable challenges that humanity faces when confronted with complex issues, particularly when one clings tenaciously to strong, emotion-driven opinions. Furthermore, we must acknowledge the growing hostility towards expertise that has taken root in our society over the past decade—a hostility that stands as a colossal barrier to the exercise of critical thinking.

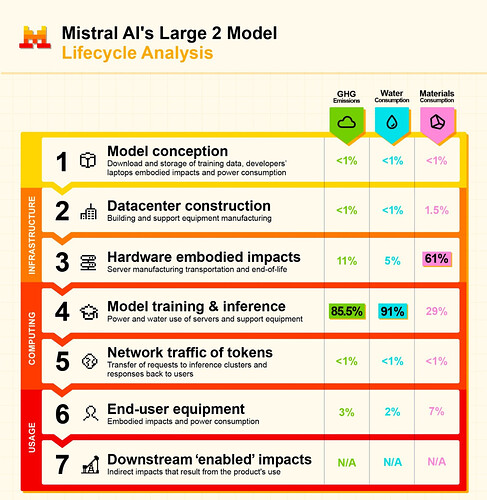

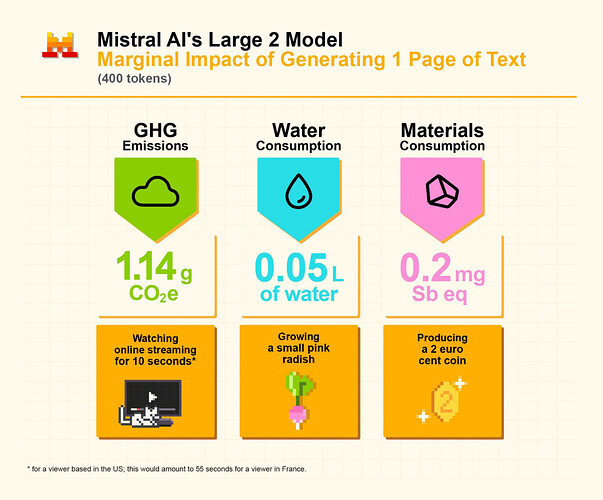

In the realm of generative AI, we find ourselves beset by a confluence of anti-technology sentiments, aversions to business, and a troubling lack of numerical literacy. This has given rise to the alarming rhetoric that “ChatGPT is wreaking havoc upon our environment.” Moreover, we observe a tendency among many to focus solely on isolated aspects of intricate systems, be it the production and use of glass and metal water bottles or the complex logistics of Amazon. Such narrow perspectives may yield emotionally compelling arguments, yet they often result in actions that are, at best, marginally effective. Let us strive for a broader understanding, for it is only through comprehensive insight that we may navigate the challenges before us.

Quentin Tarantino

INT. COFFEE SHOP - DAY

The camera zooms in on a table where two friends, JACK and LUCY, sit sipping their coffee. JACK leans in, animated, as he talks about Masley’s Substack posts.

JACK

(leaning forward, intense)

You know what Masley’s been laying down on Substack? It’s like a spotlight on the mess we humans make when we tackle complex issues. I mean, when you’ve got a strong opinion fueled by emotion, it’s like trying to drive a car with no brakes. You just crash.

LUCY nods, intrigued, her eyes wide.

LUCY

Yeah, but what about the hostility? It’s like a plague.

JACK

Exactly! This hostility towards expertise that’s been brewing over the last decade in the U.S.? It’s a massive roadblock to clear thinking. Like, come on! We need experts, not just loud voices.

He takes a sip of his coffee, then leans back, crossing his arms.

JACK

And let’s talk about generative AI. It’s a cocktail of anti-tech vibes, anti-business rants, and a whole lot of people who can’t count. You hear the chatter? “ChatGPT is destroying the environment!” It’s like a bad movie line.

LUCY chuckles, shaking her head.

LUCY

Right? It’s all surface-level drama.

JACK

(gesturing wildly)

Exactly! People only see one piece of the puzzle. They’re fixated on glass and metal water bottles or Amazon’s logistics like it’s the whole story. But it’s not! It’s a tangled web, and they’re just pulling at one thread.

He leans in closer, eyes narrowing.

JACK

And those calls to action? They sound good, but they’re like a flashy car with no engine. Emotionally appealing but barely effective.

LUCY raises her coffee cup, a smirk on her face.

LUCY

To the tangled web, then.

They clink their cups together, the camera pulling back as they continue their animated discussion, the world around them fading into the background.

FADE OUT.