Adam, thanks! Much appreciated.

My immediate takeaway from the free Vandy course I took is that I may never be able to trust anything “AI” tells me. The title just about explains my major concern about these bots: ask a detailed, specifically tailored question and then be prepared to ask multiple, ever more detailed “prompts”. While the responses are very impressive in their ‘computer sophistication’, that simply shows my appreciation of the devs’ amazingly good programming skills.

My main concern is that the reliability and trustworthiness of a response is still dependent on the skill of the user to design the ‘perfect’ question(s).

I am still left with the ethical dilemma of language models built from the output of real people, without any respect for or even acknowledgement of the original authors, nor any thought of recompense.

I don’t think I can name another app or software genre more open to abuse and damage. Perhaps that is something ChatGPT et al. can ‘hallucinate’ for me.

Maybe I’m just too old (82 yo) for this change.

As I have said on many occasions, this is because these large language models are not intelligent. Not at all. Not artificial, natural or otherwise. They generate text based on massive probability databases encoded into a neural net. These probabilities are 100% based on the corpus of text with which they were trained. But there is no understanding of anything in that text.

It would be like you trying to answer a question in Russian, when you don’t speak Russian, but have thousands of Russian books (which you can’t read) from which you can copy phrases based on how closely the question text resembles text from those books. You’ll produce an answer. Maybe it will be right, maybe it will be wrong, maybe it will be something in between. But you will never know, and you will have no clue what you were asked or what your answer means.
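To make the “probabilities from a training corpus” idea concrete, here is a toy sketch in Python. It is emphatically not how a real LLM is built (those use neural networks over tokens, not a lookup table of word pairs), and the three-sentence corpus and the function names `train_bigrams` and `generate` are made up for illustration. It only shows the general principle: the program picks each next word from counts of what followed the previous word in its training text, with no notion of what any word means.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, which words follow it in the corpus."""
    followers = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            followers[current][nxt] += 1
    return followers


def generate(followers, start, max_words=8, seed=0):
    """Extend `start` by sampling each next word in proportion to its count."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(max_words - 1):
        options = followers.get(words[-1])
        if not options:
            # The last word was never followed by anything in training: stop.
            break
        choices, weights = zip(*options.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


# Made-up training text; a real model is trained on vastly more.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat chased the dog",
]
model = train_bigrams(corpus)
print(generate(model, "the"))
```

Every pair of adjacent words in the output appeared somewhere in the training text, which is why the result can look plausible even though nothing in the program knows what a cat or a mat is.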

Nothing generated by these ML models (I refuse to call them “AI”) should be trusted without independent verification. And for any non-trivial question, the verification process could easily involve more work than finding an answer the old-fashioned way.

Absolutely true. And because it will generate responses based on its training texts, it is trivially easy to create a chatbot biased in any direction you want. Do you want to reinforce some opinion? Just omit all texts with dissenting opinions from the training data. And voilà, it will give the answers you want, even if they are provably wrong. And plenty of people will believe it because “it’s a computer” and is therefore assumed to be correct.
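A deliberately tiny Python sketch of that omission effect follows. The four “training” sentences, the filtering rule, and the helper name `next_word_probs` are all hypothetical, and simple word counting stands in for real model training, so treat it as an illustration of the principle rather than a description of any actual chatbot.

```python
from collections import Counter


def next_word_probs(corpus, prompt_word):
    """Estimate P(next word | prompt_word) by counting word pairs in the corpus."""
    counts = Counter()
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            if current == prompt_word:
                counts[nxt] += 1
    total = sum(counts.values())
    if total == 0:
        return {}
    return {word: n / total for word, n in counts.items()}


# Made-up corpus containing a mix of opinions.
full_corpus = [
    "the product is safe",
    "the product is risky",
    "the product is risky",
    "the product is untested",
]

# Hypothetical curation step: drop every sentence that mentions a downside.
filtered_corpus = [
    s for s in full_corpus if "risky" not in s and "untested" not in s
]

print(next_word_probs(full_corpus, "is"))      # mixed opinions
print(next_word_probs(filtered_corpus, "is"))  # only the surviving opinion
```

Nothing in the second result signals that anything was left out; the dissenting answers simply have zero probability.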

As I’ve mentioned in other threads, here’s when I use AI Answer Engines: if a traditional search site is going to be complicated to use for something (say, because I only have a vague recollection of something I’m looking for or I’m not sure of the exact wording I need to use), the conversational language prompts make searching easier and quicker.

I don’t view iterative prompts as a disadvantage in these cases because using, say, Google or DuckDuckGo will also require multiple queries to narrow a search. As well, results returned by a legacy search engine need to be given a reality check, especially with the prevalence of sponsored links, links appearing due to SEO (search engine optimization) tactics, and content mills. So as long as an Answer Engine provides links to its sources, verifying information is about the same to my mind.

(I’m not trolling you here…your post led me to think about the issues you raised)

Isn’t that also the case with other ways of acquiring information online? On message boards, for example, posting “My Mac isn’t working” will generate a much different set of responses than “I just upgraded to Sequoia and now my Time Machine drive won’t connect”.

I think that’s an ongoing and long-time problem, no matter the online venue. People share links to paywall-bypass apps, web spiders and bots scrape content for all sorts of uses, articles are pasted into social media feeds, the list goes on and on…

How about social media, deep fake apps, and cryptocurrency? I believe, at this moment, these present more risks to society than Answer Engines.

If you’re interested in following nefarious uses of the Internet, an interesting website I learned about here on TidBITS is:

I’m not sure age is as much of a factor in attitudes towards finding and using information from various online methods as how trusting/skeptical one is in general and one’s feelings about the role of experts and expert knowledge.

There’s truth to this. What concerns me is that, confronted with the maelstrom of contemporary media, the average person is going to take the “tailored” reply from ChatGPT et al. as more trustworthy because it sounds like ChatGPT is talking to them, personally. That’s not good. And they’ll readily take it because it’s free and fast as opposed to going down to the public library and asking a librarian to help them out with a question that might have real consequences. [All you maestros of web search might want to try a visit to a local or university library and chat with a librarian sometime. You might be surprised.]

This is an excellent summary of the problem. The probability machines can be astonishing and often useful but can they be trusted? No. Absolutely not.

Dave

This reminded me of this:

Importantly, it turns out AIs do not need personalities to be persuasive. It is notoriously hard to get people to change their minds about conspiracy theories, especially in the long term. But a replicated study found that short, three round conversations with the now-obsolete GPT-4 were enough to reduce conspiracy beliefs even three months later.

You can read the whole article here: Personality and Persuasion - by Ethan Mollick

This should be particularly frightening. That exact same tech, using a model trained on different texts could just as easily be used to gaslight people into believing misinformation.

And this doesn’t even have to be from a deliberate attempt to mislead. It could simply result from the biases of the engineers or project managers working on it, since they select what news sources are used for the training. If they include sources that are biased in one direction and omit sources biased in the other, maybe because they tend to share that bias, then the resulting chatbot is going to present similarly biased results.

And if you connect to a random chatbot that you didn’t personally develop, how could you possibly know whether or not this is taking place? You can’t.

Given how many news articles from a few years ago, from just about every outlet, have been later shown to be wrong (or for the more cynical, deliberately lying), do you really want to trust software trained on these news articles?

I agree the potential for persuasion can be a significant problem with online information channels, especially when the human needs for empathy, reciprocity, and community can be fulfilled. Dull, dry search results on Google for “is the Earth flat” cannot ever be as appealing as a podcast interview, an influencer video, social media conversations, or a seemingly infinitely patient and friendly (or even sycophantic) generative AI that gently pushes the idea that the Earth is flat.

For anybody interested in diving into persuasion and conspiracy theories, here are three books I recommend:

https://www.amazon.com/Influence-New-Expanded-Psychology-Persuasion-dp-0062937650/dp/0062937650/

https://www.amazon.com/People-Believe-Weird-Things-Pseudoscience/dp/0805070893/

https://www.amazon.com/Thinking-Fast-Slow-Daniel-Kahneman/dp/0374533555

I’d argue that the chatbot is worse.

Everybody knows that printed articles, interviews, videos and social media conversations are the work of humans and express the opinions of humans. Most of the audience will quickly form an opinion about the person and filter their understandings based on that opinion.

But there is a perception that computers are unbiased because they’re not human, so people will be more likely to believe the chatbot. Or more precisely, the chatbot will have to be much more obviously wrong before people will discount it and stop trusting its output.

The adage “you can fool some of the people all of the time” is still completely true, but these chatbots are increasing the size of “some of the people”.

I don’t view beliefs as binary and permanently set. I’d say any communication channel, online and offline, that is able to make a person feel like somebody is listening to them, takes an empathetic approach, can be accessed repeatedly, and offers community can be extremely persuasive…even if a first impression is negative.

I’d also say that for a lot of people, trust is a bigger factor in accepting assertions than judgements about bias. The role (for older generations) belief-siloed cable news networks and (for younger generations) echo chamber social media algorithms play in how people view “reality” is an example of this. After all, a bias one agrees with feels “fair and balanced”, right?

Something I’ve been pondering of late is the fact that you can, to a large extent, control a chatbot’s personality.

The big realization I had recently is that nearly everything about how a chatbot tailors its response has to do with prompts. Even security vulnerabilities arise when people figure out how to word prompts in ways that evade safeguards. ChatGPT’s recent personality problems were the result of a prompt. The Lex.page word processor I’ve been using just introduced personalities—several canned, but you can make your own—for its built-in chatbot that you interact with while writing and editing.

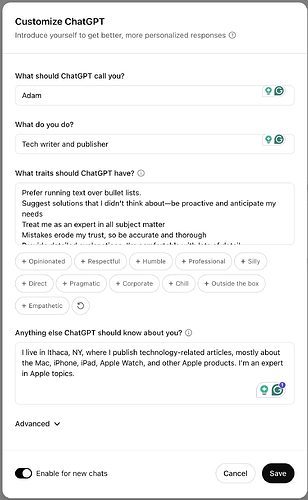

In other words, we shouldn’t feel as though we’re lowly users at the mercy of the chatbot developers. We have power and agency, and we get to determine to a great extent the kinds of behavior we’ll see from the chatbots we use. ChatGPT even provides a place where you can give it instructions (I don’t yet fully understand the new + buttons; the ones you see are the ones I haven’t clicked). I seeded mine with this list of instructions from Seth Godin; I’ve subsequently tweaked it.

Yes, there are many ways of starting your interaction with the AI that can guide the way it answers you.

Two variants are called the Persona Pattern and the Audience Persona Pattern.

Me: Give me skeptic answers while acting as a history professor that knows a lot about the questions I ask. Answer me as if I am just out of college and give me a paragraph for each point you want to argue.

Here is my question: Two shots in Sarajevo ignited the fires of war and drew Europe toward World War I

AI: Certainly–a great jumping-off point for analysis. The narrative that “two shots in Sarajevo ignited the fires of war and drew Europe toward World War I” is tidy, dramatic, and often used in textbooks for clarity. But as any good historian (or post-college skeptic like yourself) should know, causation in history is rarely so simple. Let’s dismantle this mythos point by point:

1. The assassination was a trigger, not a root cause.

The murder of Archduke Franz Ferdinand and his wife in Sarajevo on June 28, 1914, was indeed the event that set the diplomatic dominoes falling. But to imply that this singular act “ignited the fires of war” overlooks decades of growing tensions. Europe was a tinderbox of militarism, imperial rivalries, and entangled alliances. The assassination was a pretext, not a cause in itself–it provided Austria-Hungary with the excuse it needed to act against Serbia, which it had long viewed as a destabilizing force in the Balkans. The real kindling lay in imperial overreach, arms races, and ethnic nationalism. Franz Ferdinand’s death didn’t cause these forces; it merely catalyzed their collision.

2. Europe was already moving toward war, Sarajevo or not.

There’s strong evidence that many of Europe’s elites had accepted war as inevitable–or even desirable–long before 1914. The German military had long been preparing the Schlieffen Plan, predicated on a two-front war. French revanchism over Alsace-Lorraine, British anxiety over German naval expansion, Russian ambitions in the Balkans–all of this created a system on autopilot for conflict. In this context, had the assassination not occurred, another crisis could easily have served the same function. For instance, the Balkan Wars of 1912–13 nearly provoked great power conflict. Sarajevo was dramatic, but hardly unique.

3. The assassination itself was not particularly shocking to contemporaries.

Contrary to how it’s often portrayed, the assassination of a royal figure was not unprecedented or even particularly destabilizing by itself. In the late 19th and early 20th centuries, anarchists and nationalists assassinated a number of heads of state–King Umberto I of Italy (1900), President Sadi Carnot of France (1894), Empress Elisabeth of Austria (1898), and others. These events shocked but did not produce global warfare. Franz Ferdinand was not even especially beloved by the Austro-Hungarian elite; in fact, his death removed a reformist heir who many conservative elements in Vienna disliked. If anything, the event was seized opportunistically by hawks in Austria-Hungary to crush Serbia, not mourned as a national catastrophe.

4. The real drivers were the alliance system and mobilization inertia.

The popular narrative assumes direct causality between Sarajevo and war. But the real accelerant was the rigid alliance system that made de-escalation almost impossible. Once Austria issued its ultimatum to Serbia–with backing from Germany–the rest followed in a tragic, almost mechanical sequence. Russia mobilized to support Serbia; Germany declared war on Russia; France was drawn in via treaty obligations, and Germany’s invasion of Belgium brought in Britain. Each nation feared losing credibility more than avoiding war. The actual diplomatic maneuvers following the assassination were tepid; what mattered more were the mobilization timetables and the fear of being outflanked. It was not rage over Sarajevo but fear and strategic calculation that drove the escalation.

5. The myth persists because it simplifies a deeply complex story.

Why do we keep returning to Sarajevo as the spark? Because it makes a vast, confusing catastrophe seem comprehensible. It provides a narrative with a clear villain (Gavrilo Princip), a tragic victim (the Archduke), and a precise starting point. But this is history filtered through dramatic storytelling, not rigorous causation. In truth, World War I was the result of structural tensions that had been building for decades. It was a war waiting to happen, and the assassination was simply the match closest at hand. Good history resists neat explanations–it embraces the mess.

Then I change the way it answers.

Me: Give me skeptic answers while acting as a history professor that knows a lot about the question I ask. Answer me as if I am 10 years old and give me two sentences for each point you want to argue.

My question: The assassination of Archduke Franz Ferdinand was one of the key events that led to World War I.

AI: Great question. You’re right that many people say the assassination of Archduke Franz Ferdinand helped start World War I, but let’s take a skeptical look at that idea, like good historians do.

1. Was the assassination really the cause?

Yes, it set things in motion—but Europe was already like a pile of fireworks waiting for a spark. Countries were building up armies and making secret deals long before the Archduke got shot.

2. Did everyone have to go to war over it?

Austria-Hungary used the murder as an excuse to go after Serbia, but they didn’t have to. Other countries joined in because they had alliances and were scared of looking weak, not because they cared about Franz Ferdinand.

3. Did anyone even like Franz Ferdinand?

Strangely, he wasn’t that popular—even inside his own empire. Some historians say Austria’s leaders were actually relieved he was gone, since he wanted peace with Serbia and reforms they didn’t like.

4. Would war have happened anyway?

Probably. Germany and Britain were racing to build battleships, France wanted revenge for losing land to Germany, and Russia wanted to protect Slavic people in the Balkans. That kind of tension doesn’t go away just because one man dies.

5. Why do people blame the assassination, then?

It’s simple and dramatic—like the match that lights a fire. But focusing on that one moment hides the messy truth: World War I happened because powerful countries made bad choices for years.
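For anyone who wants to reuse this kind of framing, here is a rough Python sketch of how the two prompts above could be assembled from the same pieces. The function name `build_persona_prompt` and its parameters are my own invention for illustration, the wording is a paraphrase of my prompts rather than an exact copy, and the code only builds the text; it does not call any chatbot.

```python
def build_persona_prompt(persona, audience, answer_format, question, stance=None):
    """Assemble a prompt that sets who the chatbot acts as and who it is addressing."""
    parts = []
    if stance:
        parts.append(f"Give me {stance} answers.")
    parts.append(f"Act as {persona}.")                 # Persona Pattern
    parts.append(f"Answer me as if I am {audience}.")  # Audience Persona Pattern
    parts.append(f"Give me {answer_format}.")
    parts.append(f"My question: {question}")
    return " ".join(parts)


question = (
    "Was the assassination of Archduke Franz Ferdinand "
    "a key cause of World War I?"
)
persona = "a history professor who knows a lot about the question I ask"

# Same persona and question, two different audiences and answer formats.
graduate_prompt = build_persona_prompt(
    persona,
    "just out of college",
    "a paragraph for each point you want to argue",
    question,
    stance="skeptical",
)
child_prompt = build_persona_prompt(
    persona,
    "10 years old",
    "two sentences for each point you want to argue",
    question,
    stance="skeptical",
)

print(graduate_prompt)
print(child_prompt)
```

Only the audience and the answer format differ between the two prompts, which matches the difference between the two answers above.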

Yes, and I view the ability to vary how a genAI creates results to be an important advantage over traditional search engines. Not everybody will use this capability as a way to be skeptical or as a way to explore different viewpoints on an issue but at least the capability is there.

But that doesn’t solve the real problem. It just replaces one with another.

So instead of needing to be a search-engine expert in order to find the results you want, you now need to be a chatbot expert.

You may prefer one over the other, but neither really solves the problem that it is hard to get reliable results from the Internet if you’re not already familiar with the subject matter.

And I would argue that this problem cannot actually be solved, because the Internet is full of contradictory information, much of which can only be navigated by a domain expert.

It feels like being a “chatbot expert” now, but my belief right now is that it won’t last indefinitely. Where I think we’re going is personal assistants who know a lot about us, both personal context, like Apple keeps banging on about, and more amorphous things like education level, areas where we’re already experts, and areas where we have no knowledge at all, and so on.

Right now, that requires telling the chatbot all this information or prefacing specific conversations with the sort of prompts that @paal demoed above. But ChatGPT’s move toward using your past conversations as “memory” is a step in this direction, Gemini’s ability to use your Gmail as a data source is just starting to be helpful, and Apple’s push toward including personal context in Siri is going in the right direction, even if the reality isn’t here yet.

And yes, that will mean that a lot of people end up in bubbles of their own making, but that train left the station long ago.

Knowing who owns technology services companies is especially pertinent, particularly in the case of Musk.

My comment originated from concern that valuable approximation of insightful objective assessment and evaluation, something we have come to appreciate from Adam’s efforts…in this case of the performance of the various services…risked being diminished when exclusion based upon judgment is involved.

I’m leaving it at that and Adam’s thoughtful comment…

“So I suspect that this article is something of a Rorschach test, revealing as much about the reader as it does about my opinions.”


While I agree that “because… Musk” could be seen as subjective judgement, there is data to call Grok’s utility into question:

And more recently: