Thanks for an excellent article, @ace . I’ll recommend it even to non-Apple folks.

A few comments:

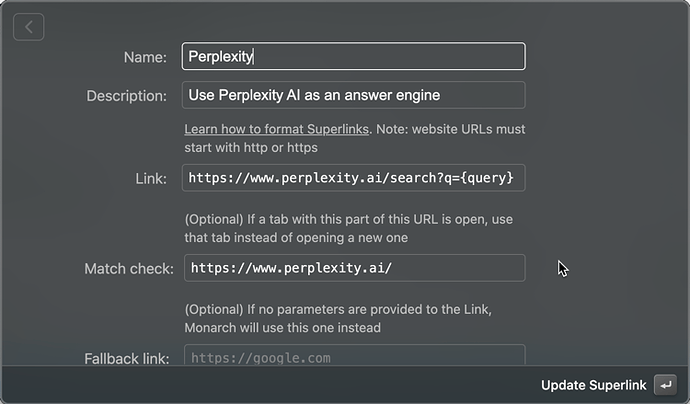

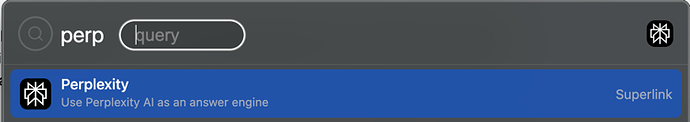

Perplexity is my primary generative AI engine, but I’ve found Grok to be quite easy to use and often to yield very good results. It’s my secondary engine.

I did have a sobering experience with Perplexity in recent weeks that highlights the need for caution when using generative AI. I’ve been working on a project where I’ve needed to generate around a dozen one-page information sheets for a subject about which I have significant first-hand knowledge.

The info sheets needed to be in a very specific format, and each needed the equivalent of 10-15 bullet points. Since I was under a lot of time pressure, I asked Perplexity to create a first draft for each topic.

On the positive side, Perplexity generated each document in seconds. Each was perfectly formatted and written to a very high, professional standard of vocabulary and grammar. Further, all were “directionally correct” in the sense that a non-expert of reasonable intelligence could be expected to understand them and, if asked about them a day or two later, could say a few things about each that, at a high enough level, matched reality.

On the negative side:

- Perhaps 10% of the bullet points were fully incorrect.

- Around a quarter of them overstated a fact or missed a subtlety in ways that would have seemed fine to a casual reader but would have damaged the work’s credibility if read by someone already working in the field.

- Another third of the points were reasonable, but overly generic.

Unfortunately, I can’t share the specifics, but I can give an example of what would have been an embarrassing error if not caught:

- Perplexity said that Person A made a very specific, important contribution to the field.

- I knew that it actually was Person B, not Person A, who made the contribution.

- Following the link to the source material, I saw it was an article entirely about Person B. Person A appeared once in the article, commenting on the importance of Person B’s contribution, but not explicitly mentioning Person B by name.

- From context, it obviously was Person B’s accomplishment, but the AI completely missed the context and attributed it to Person A, presumably because of the proximity of Person A to the accomplishment in the text.

If I hadn’t caught the error, it would have been bad for a couple of reasons:

- Both Person A and Person B would have been in the room when the results were presented, so an error would have fatally damaged the presenter’s credibility.

- The info sheets may eventually be published, so any uncorrected errors would have become misleading source material for future AI analyses.

The sobering part is that the results were so well presented by Perplexity that I think a lot of people with casual knowledge of the subject would have been comfortable forwarding them as official documents with only minor corrections.

Overall, Perplexity saved me some time, especially since it provided links to source material that helped me to “fact check” easily, but the fact checking itself still took real time and effort.

Bottom line: if the material matters, and especially if it is going to be shared on the Internet, DON’T SKIMP ON THE FACT CHECKING.